Don't stress, but Claude Code is outdated.

· AI · Alejandro Cantero Jódar

Claude Code is not outdated because it stopped being good.

It is outdated because the job it was designed for is becoming too small.

That sounds dramatic, so let me make the distinction clear: Claude Code, Codex, OpenCode, Cursor, and the rest of the current coding agents are still useful. They are probably more useful than most development tools we had before them. But the center of gravity is moving. The important unit of work is no longer a developer chatting with an agent inside a repo. The important unit of work is becoming a system that continuously takes work, implements it, validates it, and produces something a human can responsibly approve.

In other words, the chat interface is not the destination. It was the transition layer.

The December 2025 Shift

Around late 2025, something changed in day-to-day programming. The models became good enough, and the tools around them became mature enough, that you could give an agent a real request and leave it working for a while. Not just autocomplete. Not just “write this function.” Actual implementation work: inspect the codebase, edit several files, run checks, fix failures, and come back with a reasonable result.

That changed the psychology of programming.

Before that, AI coding was mostly interactive. You asked, it answered. You corrected, it adjusted. The human was still driving almost every step. With tools like Claude Code and Codex, the human started delegating a bigger chunk of the execution loop.

But once a tool can work for several minutes, inspect context, call commands, and recover from errors, the obvious question is: why is a human manually starting every task?

That is where the next layer begins.

The Ralph Loop

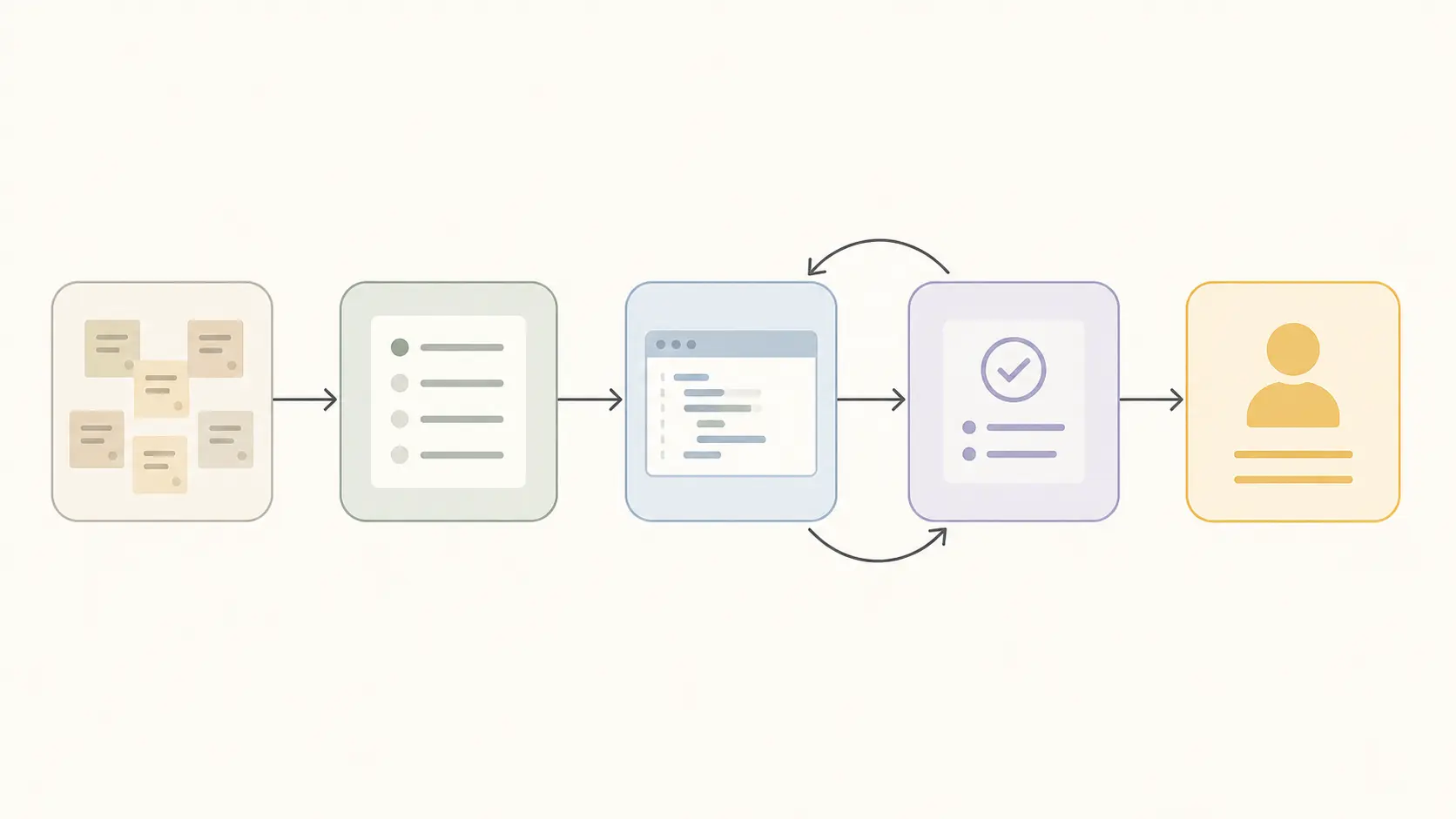

The pattern is simple:

task pool -> task picker -> implementer -> validation loop -> human review

You can call this agent orchestration, a software factory, or a Ralph Loop. The name matters less than the architecture.

A Ralph Loop is an autonomous delivery loop. It takes work from a task pool, chooses the next item, gives it to an implementation agent, validates the result, retries within limits, and produces a small, reviewable artifact for a human.

The implementation and validation steps are usually a loop of their own. The agent writes code, runs tests or checks, reads the failure, changes the code, and tries again. After some number of iterations, the loop either reaches an acceptable result or escalates.

The important part is not that the agent writes code. We already crossed that line.

The important part is that code is no longer the workflow.

Code becomes an artifact produced by the workflow.
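
As a rough illustration, the loop described in this section can be sketched in a few lines of Python. Everything here is hypothetical: the `Task` and `Artifact` types, the `implement` and `validate` callables, and the default budget of three attempts are assumptions made for the example, not the API of any real tool. The implementer could be Claude Code, Codex, or anything else that turns a task into a candidate change.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Task:
    id: str
    description: str


@dataclass
class Artifact:
    task_id: str
    diff: str
    attempts: int
    status: str  # "ready_for_review" or "escalated"
    last_failure: Optional[str] = None


# Hypothetical signatures: an implementer turns a task (plus the previous
# failure message, if any) into a candidate diff; a validator returns None
# on success or a failure message to feed into the next attempt.
Implementer = Callable[[Task, Optional[str]], str]
Validator = Callable[[Task, str], Optional[str]]


def run_task(task: Task, implement: Implementer, validate: Validator,
             max_attempts: int = 3) -> Artifact:
    """Implement, validate, and retry within a fixed budget."""
    failure: Optional[str] = None
    diff = ""
    for attempt in range(1, max_attempts + 1):
        diff = implement(task, failure)
        failure = validate(task, diff)
        if failure is None:
            return Artifact(task.id, diff, attempt, "ready_for_review")
    # Budget exhausted: hand the problem to a human instead of looping forever.
    return Artifact(task.id, diff, max_attempts, "escalated", failure)


def ralph_loop(pool: list[Task], implement: Implementer, validate: Validator,
               max_attempts: int = 3) -> list[Artifact]:
    """Work through the task pool in order and collect one artifact per task."""
    return [run_task(t, implement, validate, max_attempts) for t in pool]
```

Note that both outcomes end with a human: a passing change goes to review, and a failing one escalates with the last failure attached. The code is the payload of the `Artifact`, not the thing driving the process.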

Claude Code Moves Down the Stack

This is why I say Claude Code is outdated.

Not useless. Outdated as the main interface.

Claude Code is excellent when a human has a clear intention and wants to push that intention through a repository. But in a Ralph Loop, Claude Code is just one possible implementer. It becomes a worker inside a larger system, not the system itself.

The old flow looks like this:

- A human decides what to do.

- A human opens the coding agent.

- A human writes the prompt.

- The agent edits the code.

- A human reviews the result.

The new flow looks more like this:

- Work enters a task pool from product, support, monitoring, customer feedback, or engineering planning.

- A task picker selects work based on priority, context, risk, and available validation.

- An implementation agent creates a candidate change.

- A validation loop checks whether the change satisfies the task.

- A human reviews the final artifact and takes responsibility for shipping it.

That is a different product category.

The agent in the terminal is still useful, but the company does not want a better chat box forever. The company wants throughput, reliability, traceability, and reviewable output.
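
The task picker step in the new flow is the least familiar piece, so here is a minimal sketch of what it might look like. The `Ticket` fields, the numeric scales for priority and risk, and the `max_risk` threshold are all assumptions for illustration; a real picker would also weigh context, which is left out here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ticket:
    id: str
    priority: int               # higher means more urgent (assumed scale)
    risk: int                   # 1 = low, 3 = high (assumed scale)
    has_automated_checks: bool  # can the result be validated without a human?


def pick_next(pool: list[Ticket], max_risk: int = 2) -> Optional[Ticket]:
    """Return the most urgent ticket that is safe to run unattended."""
    eligible = [t for t in pool if t.has_automated_checks and t.risk <= max_risk]
    if not eligible:
        return None
    # Most urgent first; among equally urgent tickets, prefer the lower risk.
    return max(eligible, key=lambda t: (t.priority, -t.risk))
```

The design choice worth noticing is that filtering happens before ranking: an urgent ticket with no automated checks is never picked, because the loop would have no way to know whether it succeeded.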

The Two New Bottlenecks

I wrote before that code is no longer the bottleneck. This is the continuation of that idea.

If code generation is cheap, the bottleneck moves to the edges of the loop.

The first bottleneck is the input: the task, the plan, the specification, the context, the definition of success.

The second bottleneck is the output: the final review, the accountability, the decision that this change is safe enough to ship.

Everything in the middle is getting automated aggressively.

That does not mean the middle is trivial. Implementation is still difficult, validation is still imperfect, and agents still make strange decisions. But the economic pressure is obvious. Companies will keep pushing the implementation and validation loop toward autonomy because that is where the speed is.

The uncomfortable part is that the two bottlenecks left behind are also the two places where responsibility lives.

If the task is bad, the loop will produce the wrong thing faster.

If the review is weak, the loop will ship risk faster.

This is why fully automating the plan is dangerous. You can let an agent draft plans, split work, summarize context, and propose acceptance criteria. But if nobody owns the intention behind the task, the process becomes less responsible. The machine can produce motion without judgment.

And judgment is the scarce part now.

The New Role of Chat-Based Tools

So where do tools like Claude Code and Codex go?

They move toward planning, investigation, review, and general work.

That is already happening. These products are becoming less like “a coding interface” and more like a general-purpose coworker. The same assistant that edits files can also read documents, compare options, summarize incidents, prepare plans, inspect logs, and review a pull request.

That makes sense. If the raw implementation loop becomes increasingly automated, the human-facing interface should move closer to the places where humans still matter:

- Deciding what should be done.

- Explaining why it matters.

- Defining what success looks like.

- Reviewing what changed.

- Accepting responsibility for the result.

The best use of these tools may not be “write the code for me.” It may be “help me produce a task that a delivery loop can execute safely” and “help me review the artifact before I approve it.”

That is a very different mental model.

The Products Are Already Converging

You can see this pattern in projects like OpenAI Symphony, Sandcastle, and Vibe Kanban.

They have different interfaces, different assumptions, and different levels of ambition. But underneath, they are all circling the same architecture: collect tasks, assign them to agents, let those agents work in isolated environments, validate the result, and present something reviewable.

That is the Ralph Loop shape.

Some products will look like kanban boards. Some will look like CI pipelines. Some will look like internal platforms. Some will disappear into GitHub, Linear, Slack, or whatever tool already owns the company’s workflow.

But the direction is clear: the next productivity gain is not a smarter prompt box. It is an unattended system that can keep moving while humans are not staring at it.

The Zoom-Out: AI-Native Companies

This is not only about code.

Y Combinator has been talking about AI-native companies as organizations built around closed loops: processes that capture information, feed it back into intelligent systems, and improve over time.

That framing matters because engineering is just the easiest place to see the pattern. Code has tests, repositories, diffs, build logs, and deployment history. It is naturally artifact-rich. That makes it a good early target for autonomous loops.

But the same idea applies elsewhere.

Customer feedback can become a loop. Sales calls can become a loop. Support tickets can become a loop. Hiring can become a loop. Product planning can become a loop.

The company becomes more queryable. Decisions leave artifacts. Work is less dependent on human middleware passing information from one meeting to another. Instead of asking a manager for a lossy status summary, an agent can inspect tickets, pull requests, customer complaints, release notes, metrics, and conversations.

That is the real shift. AI is not just a productivity booster inside existing workflows. It pushes companies to redesign the workflow itself.

For startups, this is an advantage. They do not have to unwind years of process. They can build around loops from day one. For larger companies, it is harder because every process already has owners, politics, legacy systems, and a reasonable fear of breaking something that works.

But the direction is the same for both: make the organization legible to machines, then put humans at the points where judgment and accountability actually matter.

What Developers Should Do

If you are an individual developer, the lesson is not “stop using Claude Code.” That would be silly.

The lesson is: stop optimizing only for better prompts.

Prompting is useful, but it is not enough. The valuable skill is designing loops:

- Where does the task come from?

- What context does the agent need?

- What is the smallest safe unit of work?

- What validations can run automatically?

- When should the loop stop retrying?

- What artifact should the human review?

- Who is responsible if it ships and breaks production?

Those questions are more important than whether your prompt says “think step by step.”

Developers who understand this will become more valuable, not less. They will know how to turn vague work into executable tasks, how to constrain agents, how to build validation harnesses, how to review machine output, and how to keep responsibility attached to a human decision.

That is the job moving upward.
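
To make one of the questions above concrete, take "when should the loop stop retrying?" A minimal sketch, assuming the loop keeps a list of failure messages from earlier attempts: stop when the attempt budget is spent, or when the same failure repeats back to back, which suggests the agent is stuck. The function name and both thresholds are hypothetical.

```python
def should_stop(failures: list[str], max_attempts: int = 5,
                max_repeats: int = 2) -> bool:
    """Decide whether to stop retrying, given the failure messages so far."""
    if len(failures) >= max_attempts:
        return True
    # The same failure repeating back to back suggests the agent is stuck,
    # so escalate early instead of burning the rest of the budget.
    recent = failures[-max_repeats:]
    return len(recent) == max_repeats and len(set(recent)) == 1
```

Comparing raw messages is crude, since real failures often differ in line numbers or timestamps, but the shape of the decision is the point: a retry limit is a design parameter somebody has to choose and own.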

What Companies Should Do

If you are a company, the lesson is not “buy more AI tools.”

The lesson is: design the intake and review layers before pretending you have an autonomous engineering system.

A task pool full of vague tickets will produce vague output. A validation loop with weak checks will produce confident garbage. A review process where humans rubber-stamp large diffs will create the illusion of speed until the first serious incident.

The right question is not “how do we make agents write more code?”

The right questions are:

- Which tasks are safe for unattended implementation?

- Which tasks require a human to define the plan first?

- Which checks are deterministic enough to trust?

- Which changes should never be merged without domain review?

- How do we keep the final artifact small enough to review properly?

Companies that answer those questions will get real leverage. Companies that skip them will just automate chaos.
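
Some of those answers can be written down as an explicit intake policy rather than left as tribal knowledge. The sketch below is one possible shape, not a recommendation: the `Change` fields, the three routes, and the idea of a single "sensitive area" flag are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    UNATTENDED = "unattended"
    PLAN_FIRST = "human plan first"
    DOMAIN_REVIEW = "domain review required"


@dataclass
class Change:
    has_acceptance_criteria: bool
    has_deterministic_checks: bool
    touches_sensitive_area: bool  # e.g. billing or auth; what counts is an assumption


def route(change: Change) -> Route:
    """Map a proposed change to the amount of human involvement it needs."""
    # Sensitive areas always get a domain reviewer, whatever else is true.
    if change.touches_sensitive_area:
        return Route.DOMAIN_REVIEW
    # Without clear criteria and trustworthy checks, a human defines the plan.
    if not (change.has_acceptance_criteria and change.has_deterministic_checks):
        return Route.PLAN_FIRST
    return Route.UNATTENDED
```

The unattended route is the last branch on purpose: a task has to earn autonomy by passing every other check first.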

Don’t Stress

Claude Code being outdated is not bad news.

It means the tool worked so well that it helped reveal the next bottleneck.

First, AI made code generation cheap. Then it made implementation loops semi-autonomous. Now it is forcing us to care about task definition, validation, review, and accountability.

That is not the end of developers. It is the end of pretending that the main work is typing code into an editor.

The future is not a human asking a chat box to make changes all day.

The future is humans designing the loops, defining the intent, reviewing the output, and taking responsibility for what ships.

Claude Code can still be part of that future.

It just will not be the center of it.